MCP Is the TCP/IP of Agentic AI: And Your Architecture Needs to Know It

The Model Context Protocol has crossed 97 million installs and every major AI provider now ships MCP-compatible tooling. Engineering leaders who ignore this shift are building on sand.

There's a moment when a protocol stops being a proposal and starts being infrastructure. For HTTP it was the mid-90s. For REST it was roughly 2010. For the Model Context Protocol (MCP), that moment is happening right now — and most engineering organizations are still treating it like an experiment.

At Kuaray, we spend a significant portion of our advisory work helping engineering leaders distinguish between AI hype and AI infrastructure. MCP has decisively crossed that line. With 97 million installs as of March 2026 and every major AI provider — OpenAI, Anthropic, Google DeepMind, and Meta — shipping MCP-compatible tooling, this is no longer a bet on an emerging standard. The standard has already won.

What MCP Actually Changes

For years, integrating AI into enterprise systems meant building bespoke connectors: custom code gluing your LLM to your database, your ticketing system, your internal APIs. Every integration was one-off, brittle, and expensive to maintain.

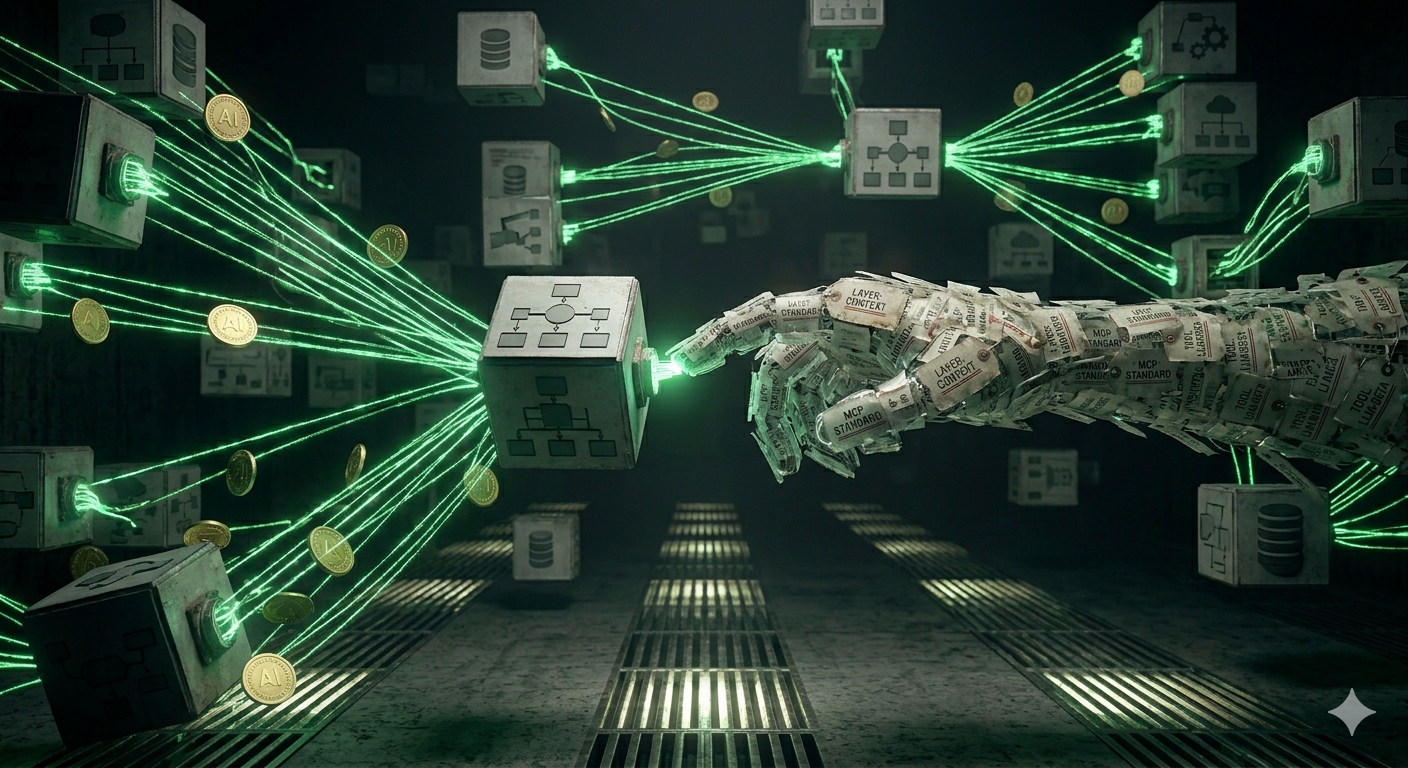

MCP fundamentally changes the economics of that problem. It defines a common protocol for how AI applications discover and invoke tools and access context, which is what makes multi-step workflows across heterogeneous systems practical to build. Think of it as the USB standard for AI tooling: once your systems expose an MCP interface, any MCP-capable model can interact with them without custom glue code.
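
To make the discover-and-invoke pattern concrete, here is a minimal in-process sketch in Python. It assumes MCP's JSON-RPC 2.0 message shape and its `tools/list` and `tools/call` methods; the `get_ticket` tool, the `TOOLS` registry, and the `handle` dispatcher are hypothetical names made up for illustration. A real deployment would sit behind an MCP SDK and a transport rather than this toy dispatcher.

```python
import json

# Hypothetical tool registry: name -> description, input schema, handler.
TOOLS = {
    "get_ticket": {
        "description": "Look up a support ticket by id (illustrative only).",
        "inputSchema": {
            "type": "object",
            "properties": {"ticket_id": {"type": "string"}},
            "required": ["ticket_id"],
        },
        "handler": lambda args: f"Ticket {args['ticket_id']}: open",
    }
}


def error(request_id, code, message):
    """Build a JSON-RPC 2.0 error response."""
    return {"jsonrpc": "2.0", "id": request_id,
            "error": {"code": code, "message": message}}


def handle(request: dict) -> dict:
    """Dispatch one JSON-RPC 2.0 request the way an MCP-style server might."""
    method = request["method"]
    params = request.get("params", {})
    if method == "tools/list":
        # Discovery: describe every tool without exposing the handler itself.
        result = {"tools": [
            {"name": name,
             "description": tool["description"],
             "inputSchema": tool["inputSchema"]}
            for name, tool in TOOLS.items()
        ]}
    elif method == "tools/call":
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return error(request["id"], -32602, "Unknown tool")  # invalid params
        text = tool["handler"](params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    else:
        return error(request["id"], -32601, "Method not found")
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}


# Discovery: the agent asks which tools exist.
listing = handle({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})

# Invocation: the agent calls one of them by name with structured arguments.
call = handle({
    "jsonrpc": "2.0", "id": 2, "method": "tools/call",
    "params": {"name": "get_ticket", "arguments": {"ticket_id": "T-42"}},
})
print(json.dumps(call, indent=2))
```

The point of the sketch is that the caller needs nothing beyond the two method names and the schema returned by discovery, which is why the same tool can be reused by different models.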

The practical implications are significant:

- Composability: Agents built against MCP can chain tools from different vendors without bespoke integration work for each pairing

- Portability: Swap the underlying model (GPT-5, Gemini, Llama 4, Claude) without rewriting your tool layer

- Auditability: MCP's structured call/response pattern creates natural logging points for compliance and observability

- Velocity: Teams building on MCP inherit a growing ecosystem of pre-built connectors instead of building from scratch

The Llama 4 Signal You Shouldn't Miss

Meta's Llama 4 release this week — widely described as "the most important open-source AI release in years" — is directly relevant to the MCP story. Unlike previous generations designed primarily for question-answering, Llama 4 was architecturally designed for agentic operation: planning, multi-step execution, and extended context maintenance across workflows.

That Meta shipped an open-source model with agentic-first design tells you something important: agentic AI is no longer a frontier capability. It's a baseline expectation.

For engineering leaders, this has a strategic implication that's easy to miss. Open-source agentic models mean your competitors don't need enterprise AI budgets to deploy autonomous workflow agents. The moat isn't access to capable models anymore — it's the quality of your tool layer, the richness of your context, and the robustness of your agentic infrastructure. MCP is how you build that layer so it doesn't need to be rebuilt with every model generation.

What Engineering Leaders Should Do Now

The 1,445% surge in multi-agent system inquiries documented by Gartner between Q1 2024 and Q2 2025 isn't theoretical demand — it's organizational appetite that's about to turn into RFPs, headcount requests, and architectural decisions landing on your desk. Here's how to get ahead of it:

Audit your integration layer. Identify which internal services (databases, APIs, internal tools) would benefit from MCP exposure. Prioritize high-frequency, high-value data sources that agents will need to reason over.

Adopt MCP-compatible tooling in your AI stack now. If you're evaluating AI development tools or orchestration frameworks, MCP compatibility should be a non-negotiable criterion — not a nice-to-have.

Design for model agnosticism. The pace of model releases (three frontier models launched in a single week this month) means any model-specific integration is already incurring technical debt. MCP is your hedge.
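
One way to picture model agnosticism is a tool layer that is defined once and never imports anything model-specific. The sketch below is a simplified illustration under that assumption: `ModelClient`, `StubModelA`, `StubModelB`, `TOOL_LAYER`, and `run_step` are all hypothetical names, and the stub classes stand in for real vendor adapters.

```python
from typing import Callable, Protocol


class ModelClient(Protocol):
    """Hypothetical interface: any adapter that can choose a tool call."""

    def choose_tool(self, task: str, tool_names: list[str]) -> tuple[str, dict]:
        ...


class StubModelA:
    def choose_tool(self, task: str, tool_names: list[str]) -> tuple[str, dict]:
        # Stand-in for one vendor's model: always picks the first tool.
        return tool_names[0], {"query": task}


class StubModelB:
    def choose_tool(self, task: str, tool_names: list[str]) -> tuple[str, dict]:
        # Stand-in for another vendor: different behaviour, same interface.
        return tool_names[-1], {"query": task.upper()}


# The tool layer is written once and knows nothing about which model calls it.
TOOL_LAYER: dict[str, Callable[[dict], str]] = {
    "search_docs": lambda args: f"docs for {args['query']}",
    "search_tickets": lambda args: f"tickets for {args['query']}",
}


def run_step(model: ModelClient, task: str) -> str:
    """Ask the model for a tool call, then execute it against the shared layer."""
    name, arguments = model.choose_tool(task, list(TOOL_LAYER))
    return TOOL_LAYER[name](arguments)


# Swapping the model is a one-argument change; the tool layer is untouched.
result_a = run_step(StubModelA(), "refund policy")
result_b = run_step(StubModelB(), "refund policy")
print(result_a)
print(result_b)
```

Only the adapter changes when a new model arrives, which is the property that keeps model-specific technical debt out of the tool layer.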

Build observability into the protocol layer. As agents handle increasingly autonomous workflows, the call graph matters as much as the output. Instrument your MCP layer from day one.
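
As a rough illustration of instrumenting the tool-call boundary, the sketch below wraps a tool handler so that every invocation leaves a structured record, whether it succeeds or fails. The names `instrumented`, `AUDIT_LOG`, and `get_ticket` are hypothetical, and the in-memory list is only a stand-in for whatever log sink or tracing backend you already run.

```python
import json
import time
import uuid
from typing import Any, Callable

# Stand-in for a real log sink or tracing backend.
AUDIT_LOG: list[dict] = []


def instrumented(tool_name: str, fn: Callable[[dict], Any]) -> Callable[[dict], Any]:
    """Wrap a tool handler so each call appends one structured audit record."""

    def wrapper(arguments: dict) -> Any:
        record = {
            "call_id": str(uuid.uuid4()),
            "tool": tool_name,
            "arguments": arguments,
            "status": "ok",
        }
        start = time.perf_counter()
        try:
            return fn(arguments)
        except Exception as exc:
            # Record the failure, then let the caller see the original error.
            record["status"] = "error"
            record["error"] = repr(exc)
            raise
        finally:
            # Runs on both success and failure, so no call goes unrecorded.
            record["duration_ms"] = round((time.perf_counter() - start) * 1000, 3)
            AUDIT_LOG.append(record)

    return wrapper


lookup = instrumented("get_ticket", lambda a: {"id": a["ticket_id"], "state": "open"})

lookup({"ticket_id": "T-42"})
try:
    lookup({})  # missing argument raises KeyError, which is still recorded
except KeyError:
    pass

print(json.dumps(AUDIT_LOG, indent=2))
```

Because each record carries a call id, tool name, status, and duration, a sequence of records can be assembled into the call graph for a workflow. In practice you would likely redact or hash sensitive arguments before logging them.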

The engineering organizations that will win the next 18 months aren't the ones that deploy the most powerful model. They're the ones with the cleanest, most composable tool infrastructure underneath the model.

Schedule a Technical Architecture Review with our Strategists — we help engineering teams design MCP-first architectures that age well regardless of which model wins next quarter.

Enlightenment Insight

In Guarani cosmology, Kuaray — the Sun — does not merely emit light. It is the organizing force that makes orientation possible: it tells the world which direction is East, when to plant, when to rest, and how all things relate to one another in time. Without the Sun, not just warmth but structure itself disappears.

The Model Context Protocol is emerging as something analogous in the agentic AI landscape. It is not the most powerful model, nor the most sophisticated agent. It is the organizing layer — the protocol that allows all the other pieces to know where they are relative to each other, to communicate without friction, and to act with coherent purpose across a complex system. Just as Kuaray (Sun) does not compete with the things it illuminates but makes their relationship to one another legible, MCP does not replace your tools, your models, or your data — it makes them composable, navigable, and ultimately useful together. Build toward the light.