Google's TurboQuant Just Made Your Inference Bill Obsolete: What Engineering Leaders Need to Know

Google's TurboQuant algorithm shrinks the KV cache by about 6x and speeds up attention computation by up to 8x, with no accuracy loss on Google's reported benchmarks. It marks a fundamental shift in how production AI systems should be architected.

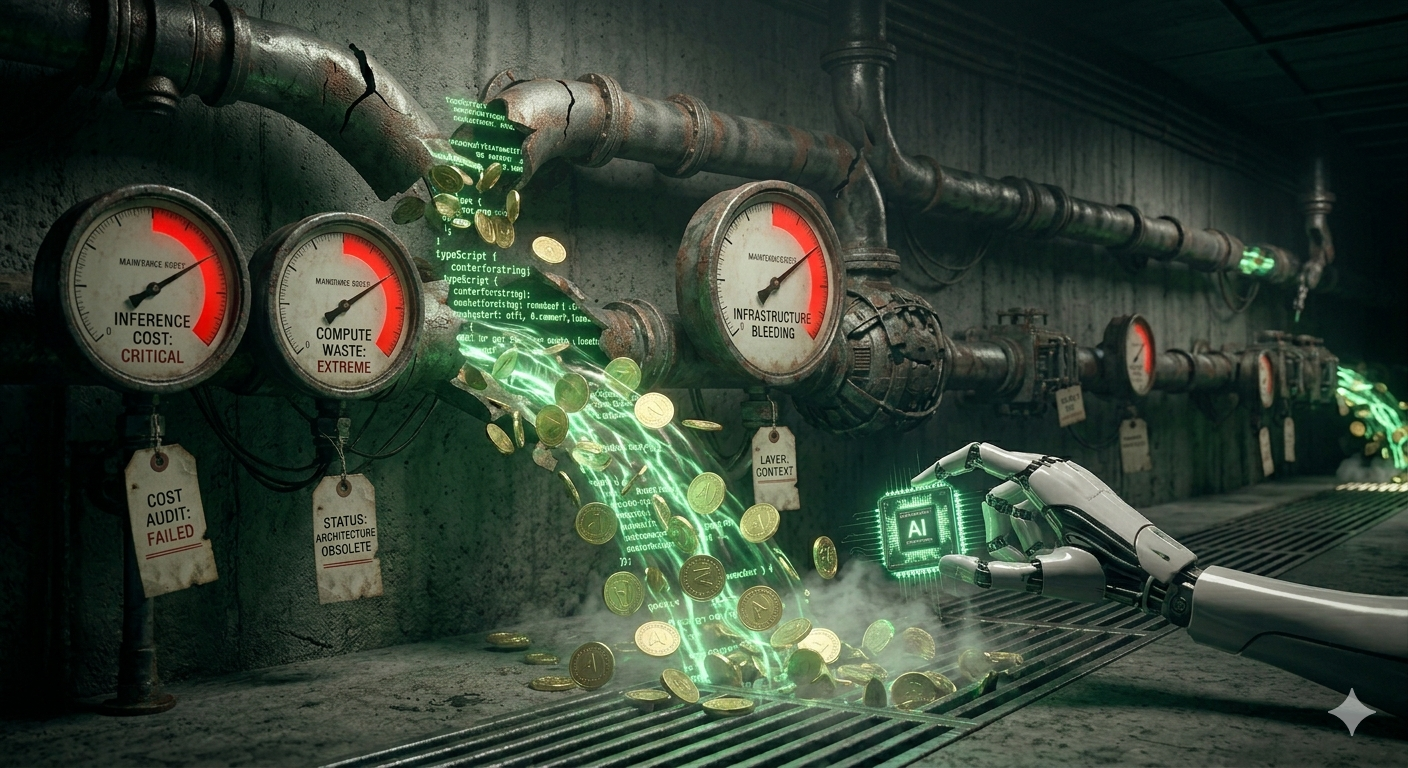

Your LLM Inference Costs Just Became Indefensible

In production LLM serving, the KV cache can silently consume 60–80% of your GPU memory. The model keeps the attention keys and values for every token in the current context, at every layer, and as context windows stretch to a million tokens, that cache becomes the single biggest bottleneck in your serving stack. Google just broke that bottleneck wide open. At Kuaray, we believe TurboQuant, presented at ICLR 2026, is the most consequential inference optimization since FlashAttention, and engineering leaders who ignore it will be overpaying for serving within six months.
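To make that memory growth concrete, here is a minimal single-head sketch of how a KV cache behaves during decoding. It assumes NumPy and uses random vectors as stand-ins for a real model's key, value, and query projections; the `KVCache` class is hypothetical, not any serving framework's API. Each decoded token appends one key row and one value row, and every later step attends over all of them, so the cache grows linearly with context length.

```python
import numpy as np


class KVCache:
    """Hypothetical single-head KV cache: one key row and one value row per token."""

    def __init__(self, head_dim: int):
        self.keys = np.empty((0, head_dim))
        self.values = np.empty((0, head_dim))

    def append(self, k: np.ndarray, v: np.ndarray) -> None:
        # Every decoded token adds one row to each array, so memory grows linearly.
        self.keys = np.vstack([self.keys, k])
        self.values = np.vstack([self.values, v])

    def attend(self, q: np.ndarray) -> np.ndarray:
        # Scaled dot-product attention of one query over every cached token.
        scores = self.keys @ q / np.sqrt(q.shape[0])
        weights = np.exp(scores - scores.max())  # numerically stable softmax
        weights /= weights.sum()
        return weights @ self.values


rng = np.random.default_rng(0)
head_dim = 64
cache = KVCache(head_dim)
for step in range(1, 6):
    # Stand-ins for the key, value, and query projections of the newest token.
    k, v, q = rng.standard_normal((3, head_dim))
    cache.append(k, v)
    out = cache.attend(q)
    print(step, cache.keys.shape, cache.keys.nbytes + cache.values.nbytes)
```

A real model repeats this for every attention head and every layer, usually in 16-bit precision, which is why the cache dominates memory at long contexts.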

What TurboQuant Actually Does

TurboQuant is a compression algorithm for the KV cache: it shrinks the cache's memory footprint during large language model inference by about 6x and speeds up the attention-logit computation by up to 8x on H100 GPUs, with no accuracy loss on the benchmarks Google reports. No fine-tuning. No retraining. Drop-in compression.

The technical approach combines two innovations:

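- PolarQuant, the main compression stage, randomly rotates each cached key or value vector before quantization. After the rotation, the coordinates follow a predictable, well-concentrated distribution, which removes the outlier skew that destroys accuracy in naive approaches and lets a fixed quantizer work without storing per-block scale factors.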

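- Quantized Johnson-Lindenstrauss (QJL), a 1-bit correction stage. It applies a random Johnson-Lindenstrauss projection, which approximately preserves distances and inner products between vectors, and keeps only the sign of each projected coordinate. TurboQuant spends this single bit on the small residual error left by the first stage, so the inner products that attention computes from compressed keys are unbiased estimates of the originals.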
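The two stages can be sketched in NumPy to show why the combination works. This is a simplified illustration under stated assumptions, not Google's implementation: stage 1 swaps PolarQuant for a random rotation plus a plain uniform grid (chosen for brevity), and stage 2 is a 1-bit QJL sketch of the residual paired with the standard sign-based estimator sqrt(pi/2) * ||r|| / m * <S q, sign(S r)>, where S is an m x d Gaussian matrix. All function names are hypothetical.

```python
import numpy as np


def random_rotation(d: int, rng: np.random.Generator) -> np.ndarray:
    """Random orthogonal d x d matrix via QR decomposition of a Gaussian matrix."""
    q, r = np.linalg.qr(rng.standard_normal((d, d)))
    # Flip column signs so the rotation is uniformly distributed.
    return q * np.sign(np.diag(r))


def _grid(d: int, bits: int):
    # Simplification: after a random rotation, each coordinate of a unit vector is
    # roughly N(0, 1/d), so one fixed grid clipped at ~3 std devs fits every vector.
    lim = 3.0 / np.sqrt(d)
    return lim, 2 * lim / 2 ** bits


def stage1_encode(x: np.ndarray, rot: np.ndarray, bits: int):
    """Stage 1 (simplified): normalize, rotate, and round each coordinate to a grid cell."""
    lim, step = _grid(x.shape[0], bits)
    norm = np.linalg.norm(x)
    y = rot @ (x / norm)
    idx = np.clip(np.floor((y + lim) / step), 0, 2 ** bits - 1).astype(np.int64)
    return idx, norm


def stage1_decode(idx: np.ndarray, norm: float, rot: np.ndarray, bits: int) -> np.ndarray:
    lim, step = _grid(idx.shape[0], bits)
    y_hat = -lim + (idx + 0.5) * step  # center of each grid cell
    return norm * (rot.T @ y_hat)  # undo the rotation and restore the norm


def qjl_encode(residual: np.ndarray, proj: np.ndarray):
    """Stage 2: keep only the sign (1 bit) of each random projection of the residual."""
    signs = np.where(proj @ residual >= 0, 1.0, -1.0)
    return signs, np.linalg.norm(residual)


def estimate_inner_product(q, x_hat, signs, r_norm, proj) -> float:
    """<q, x> is estimated as <q, x_hat> plus an unbiased QJL estimate of <q, residual>."""
    m = proj.shape[0]
    correction = np.sqrt(np.pi / 2) / m * r_norm * np.dot(proj @ q, signs)
    return float(np.dot(q, x_hat) + correction)


# Usage: compress one cached key with a 2-bit grid plus a 1-bit QJL sketch.
rng = np.random.default_rng(0)
d = 128
rot = random_rotation(d, rng)
proj = rng.standard_normal((d, d))  # m = d projections -> 1 extra bit per coordinate
key = rng.standard_normal(d)
query = rng.standard_normal(d)

idx, norm = stage1_encode(key, rot, bits=2)
key_hat = stage1_decode(idx, norm, rot, bits=2)
signs, r_norm = qjl_encode(key - key_hat, proj)
print("exact:", float(np.dot(query, key)))
print("estimate:", estimate_inner_product(query, key_hat, signs, r_norm, proj))
```

With `m = d` projections the QJL stage costs one bit per coordinate, so `bits=2` here corresponds to a 3-bit budget per element, not counting the stored norms. A single estimate is noisy; the correction is unbiased in expectation, and its spread shrinks as the residual left by stage 1 shrinks.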

The result: the KV cache drops from 16- or 32-bit floating point to roughly 3–4 bits per element, freeing GPU memory for larger batch sizes, longer contexts, or simply fewer GPUs.
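A back-of-the-envelope calculation shows what those bit widths mean in bytes. The sketch below assumes a hypothetical Llama-style model shape (32 layers, 8 key-value heads, head dimension 128) and ignores any per-vector metadata, such as stored norms, that a real quantizer adds; substitute your own model's shape.

```python
GIB = 1024 ** 3


def kv_cache_bytes(tokens: int, layers: int, kv_heads: int, head_dim: int, bits: float) -> float:
    """KV cache size: keys and values (factor 2) for every layer, KV head, and token."""
    return 2 * layers * kv_heads * head_dim * tokens * bits / 8


# Hypothetical model shape; replace with your model's config values.
shape = dict(layers=32, kv_heads=8, head_dim=128)

for tokens in (128 * 1024, 1024 * 1024):
    fp16 = kv_cache_bytes(tokens, bits=16, **shape) / GIB
    q3 = kv_cache_bytes(tokens, bits=3, **shape) / GIB
    print(f"{tokens:>9} tokens: {fp16:6.1f} GiB at 16-bit, {q3:5.1f} GiB at 3-bit, "
          f"{fp16 / q3:.2f}x smaller")
```

For this shape, a million-token context needs 128 GiB of KV cache at 16-bit but 24 GiB at 3-bit. The compression ratio is simply baseline bits divided by quantized bits, so 3 bits against a 16-bit baseline gives about 5.3x.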

Why This Changes the Production Calculus

The implications for engineering organizations running LLM inference at scale are immediate and concrete:

- Batch size unlocked. With the KV cache about 6x smaller, the memory left over after model weights can hold up to roughly 6x more concurrent sequences per GPU when KV cache capacity is the binding constraint. For throughput-bound workloads, this translates directly to fewer machines and lower cloud bills.

- Million-token contexts become practical. Context windows of 500K–1M tokens have been technically possible but economically brutal. TurboQuant can make long-context serving viable on hardware you already own.

- No retraining tax. Unlike pruning or distillation, TurboQuant is applied at inference time with no model modification. Your existing fine-tuned models benefit immediately.

- Open-source implementations are already shipping. Community implementations on GitHub are available today, meaning your platform team can prototype integration this sprint.
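The batch-size claim can be checked with simple arithmetic: concurrency is bounded by the memory left after weights and working buffers, divided by the KV cache one sequence needs. All numbers below are hypothetical placeholders (an 80 GiB memory budget, 16 GiB of weights, 4 GiB reserved for activations, 8K-token sequences, and a Llama-style shape), and real serving engines add fragmentation and metadata overheads that this ignores.

```python
GIB = 1024 ** 3


def max_concurrent_sequences(gpu_bytes, weight_bytes, reserve_bytes,
                             seq_len, layers, kv_heads, head_dim, bits):
    """Upper bound on concurrent sequences when KV cache capacity is the limit."""
    free = gpu_bytes - weight_bytes - reserve_bytes
    per_token = 2 * layers * kv_heads * head_dim * bits / 8  # keys + values
    return int(free // (per_token * seq_len))


# Hypothetical deployment; substitute your own measurements.
deployment = dict(gpu_bytes=80 * GIB, weight_bytes=16 * GIB, reserve_bytes=4 * GIB,
                  seq_len=8192, layers=32, kv_heads=8, head_dim=128)

baseline = max_concurrent_sequences(bits=16, **deployment)
compressed = max_concurrent_sequences(bits=3, **deployment)
print(f"16-bit KV cache: {baseline} sequences; 3-bit: {compressed} sequences")
```

Here the bound rises from 60 to 320 sequences. The gain is capped by the compression ratio and only materializes when KV cache memory, rather than compute or a latency target, is what limits batch size.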

What Engineering Leaders Should Do This Quarter

The competitive window here is narrow. Organizations that integrate KV cache compression into their serving stack now will compound savings as token volumes grow.

- Benchmark your KV cache overhead. Profile your production inference workloads and measure what percentage of GPU memory is consumed by the KV cache. If it's above 50%, as it often is for long-context and high-concurrency workloads, TurboQuant is directly applicable.

- Prototype on your heaviest workloads first. Long-context RAG pipelines, multi-turn chat, and document analysis workflows will see the largest gains. Start there.

- Reassess your GPU procurement plan. If you were planning to scale horizontally to handle throughput, TurboQuant may let you achieve the same capacity on your current fleet. Delay that purchase order until you've tested.

- Combine with hardware-level optimizations. TurboQuant stacks with inference-optimized hardware like NVIDIA's upcoming LPU and custom ASICs. The software-hardware efficiency compounding is where the real advantage lives.
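The first step, benchmarking KV cache overhead, can start with a quick analytic estimate before real profiling. The sketch below assumes a Hugging Face-style `config.json` with `num_hidden_layers`, `num_attention_heads`, `num_key_value_heads`, and `hidden_size` fields (names as used by Llama-family configs; other architectures may differ), a 16-bit KV cache, and a hypothetical workload. The function name and example numbers are illustrative, the estimate ignores activations and framework overhead, and the result should be confirmed against measured GPU memory.

```python
import json


def kv_cache_share(config: dict, weight_bytes: int, batch: int, context: int,
                   kv_bits: int = 16) -> float:
    """Fraction of (weights + KV cache) memory taken by the KV cache for a workload."""
    layers = config["num_hidden_layers"]
    kv_heads = config.get("num_key_value_heads", config["num_attention_heads"])
    # Some configs state head_dim explicitly; otherwise derive it from hidden_size.
    head_dim = config.get("head_dim", config["hidden_size"] // config["num_attention_heads"])
    kv_bytes = 2 * layers * kv_heads * head_dim * batch * context * kv_bits / 8
    return kv_bytes / (kv_bytes + weight_bytes)


# Illustrative config fragment in the Llama-family format (hypothetical values).
config = json.loads("""{
  "num_hidden_layers": 32,
  "num_attention_heads": 32,
  "num_key_value_heads": 8,
  "hidden_size": 4096
}""")

share = kv_cache_share(config, weight_bytes=16 * 1024 ** 3, batch=16, context=32768)
print(f"KV cache share: {share:.0%}", "-> strong candidate" if share > 0.5 else "")
```

For this hypothetical workload of 16 concurrent 32K-token sequences, the KV cache takes 80% of the estimated footprint, well past the 50% threshold.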

Schedule a Technical Architecture Review with our Strategists — we help engineering teams redesign inference pipelines to capture efficiency breakthroughs like TurboQuant before competitors do.

Enlightenment Insight

In Guarani cosmology, Kuaray (Sun) achieves its immense power not through brute force, but through the elegant compression of matter into radiant energy — a star converting mass into light with extraordinary efficiency. TurboQuant mirrors this ancient principle: the most powerful systems are not those that consume the most, but those that transform the least into the most. Where others would add more silicon, more memory, more machines, the wiser path compresses — finding the essential signal within the noise, just as the Sun distills hydrogen into the light that sustains all life. At Kuaray, we believe the next era of AI belongs not to those who scale the loudest, but to those who optimize the deepest.